The AI Infrastructure Stack: Comparing 21 Companies Riding the Same Wave

From EDA software to neocloud upstarts: Who actually has a moat, and who is just along for the ride.

The AI buildout is the largest capital cycle of our generation. Hundreds of billions of dollars a year now flow into the chips, the fabs, the tools that make the chips, the software that designs them, the optical fibers that connect them, and the warehouses that house them. The companies receiving that spend look nothing like each other.

At Value & Momentum Portfolio, we’ve been working through this landscape one company at a time. The 21 names below aren’t the entire AI infrastructure universe, but they’re the ones we’ve covered so far, and they’re broad enough to map the value chain. Full single-name write-ups are in the Substack archive.

They share one thread: each benefits from the AI buildout. Underneath, they could not be more different. Some sell the irreplaceable software that designs every advanced chip on earth. Others physically print transistors onto silicon, one atom at a time. Some rent the resulting compute by the hour. A few are 50-year-old titans with monopoly moats; others are former Bitcoin miners retrofitting warehouses to host GPUs.

And then there is NVIDIA: sitting at the center of the ecosystem, referenced by almost every other company on this list, sometimes as customer, sometimes as competitor, sometimes as both.

What follows maps these companies onto the value chain, compares their business models, weighs their moats, and places each on the spectrum from quality compounder to speculative bet. The goal isn’t to recommend any of them. It’s to clarify what you’re actually buying when you buy each one.

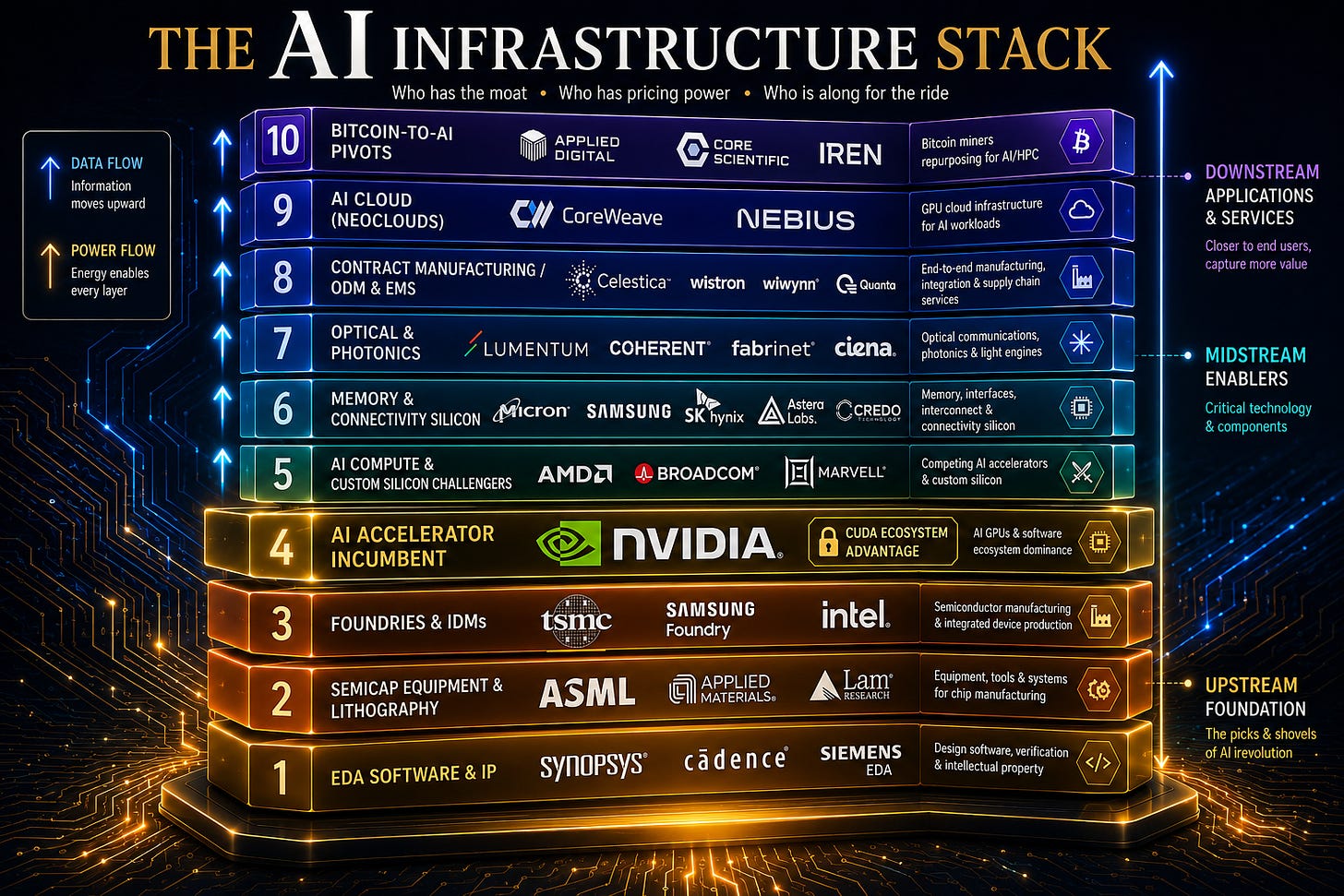

The AI Stack at a Glance

If you imagine the AI economy as a layered stack, these companies populate almost every level except the foundation models themselves. From the bottom up: EDA software designs the chips; equipment makers build the tools that fabricate them; foundries actually manufacture the silicon; chip designers create the products that get fabricated, with NVIDIA dominating that layer; memory, connectivity, and optical specialists complete the package; contract manufacturers assemble the boxes; and at the top, cloud operators rent out compute by the hour.

NVIDIA: The Center of Gravity

Before discussing any other company, it is worth establishing why NVIDIA deserves its own section. Of the twenty other companies in this analysis, almost every single one references NVIDIA in some way. Synopsys’s most prominent new investor of 2026 is NVIDIA, which took a 4.8 million-share stake. TSMC fabricates every cutting-edge NVIDIA AI chip; Intel just received a $5 billion investment from NVIDIA and is competing with TSMC for NVIDIA’s foundry business. Lumentum was hand-picked for a $2 billion direct investment as part of NVIDIA’s $4 billion “optics blitz.” CoreWeave is a deep strategic NVIDIA partner with NVIDIA holding an equity stake; Nebius is a launch partner for new NVIDIA platforms. The Bitcoin pivots (IREN, Applied Digital, Core Scientific) are racing to deploy NVIDIA GPUs by the thousand. AMD, Broadcom and Marvell define their entire competitive position relative to NVIDIA. Micron is the sole U.S. HBM supplier feeding NVIDIA’s AI platforms.

NVIDIA is not just one of the companies on this list; it is the gravitational center the others orbit. The CUDA software stack, two decades in the making, is the deepest software moat in semiconductors and arguably in all of computing. A generation of AI researchers and engineers trained on it, and every major framework optimizes for it. That is why, even though Google’s TPUs (co-designed with Broadcom) are reportedly 40% cheaper to run, hyperscalers still buy NVIDIA in volume.

The fundamental performance remains exceptional. Q3 FY2026 (reported Nov 19, 2025) delivered $57 billion of revenue, up 62% YoY, with EPS up 60%. The company beat estimates again. Forward P/E sits at a not-unreasonable 38.7x with a forward PEG of 1.02x. By traditional growth-investing metrics, NVIDIA is not in bubble territory.
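
A forward PEG near 1.0x is the usual shorthand for “fairly priced for its growth.” A minimal sketch, assuming the common convention that PEG equals forward P/E divided by expected annual EPS growth in percent, shows what the two quoted multiples imply; the helper names are ours, for illustration only:

```python
def implied_growth_pct(forward_pe: float, peg: float) -> float:
    """Expected annual EPS growth (%) implied by a forward P/E and a PEG ratio.

    Assumes the common convention PEG = forward P/E / growth (in percent).
    """
    return forward_pe / peg


def year_ago_value(current: float, yoy_growth_pct: float) -> float:
    """Value one year earlier, given a current value and its YoY growth (%)."""
    return current / (1 + yoy_growth_pct / 100)


# Figures quoted in the text above.
growth = implied_growth_pct(forward_pe=38.7, peg=1.02)
prior_quarter_revenue = year_ago_value(current=57.0, yoy_growth_pct=62)  # $B

print(f"Implied forward EPS growth: {growth:.1f}% per year")
print(f"Implied year-ago quarterly revenue: ${prior_quarter_revenue:.1f}B")
```

On these inputs the market is pricing in roughly 38% annual EPS growth, well below the 60% the company just reported, which is the arithmetic behind the “not in bubble territory” conclusion.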

What makes the contrarian case is the second derivative. Growth is decelerating from the 200%+ rates of early 2024 to 56-69% across recent quarters: still extraordinary, but the slope is flattening.

The competitive picture also explains why so many of the other 20 companies on this list are doing as well as they are. Broadcom’s custom AI chips for Google are reportedly 40% cheaper to run; AMD’s MI308 is ramping; Amazon, Google and Microsoft are all developing in-house AI silicon. Marvell’s custom ASIC business with Amazon and Microsoft, ramping toward $2B by 2028, exists because hyperscalers want alternatives to NVIDIA. The export controls cutting NVIDIA out of China have created opportunities for domestic Chinese competitors (Huawei, Moore Threads). Every one of these challenger stories is, at some level, a bet that NVIDIA’s near-monopoly share of the AI accelerator market gradually erodes.

The bull case is straightforward: NVIDIA still owns roughly 80% of the AI accelerator market, the CUDA moat is genuinely durable, the hyperscalers’ in-house chips are years away from displacing meaningful volume, and the company’s growth at this scale remains unprecedented in the history of semiconductors. The bear case is that the easy money has been made, valuation is stretched against decelerating growth, and the universal Wall Street consensus, 50 “Buy” actions since September with no downgrades, has itself become a contrarian red flag.

The Software That Designs the Chips: Synopsys

Before any AI chip can be fabricated, it has to be designed, and chip design today is unthinkable without Electronic Design Automation (EDA) software. Synopsys, together with its long-time rival Cadence, forms a duopoly that supplies the toolchain used by virtually every fabless and integrated chipmaker on earth. NVIDIA, AMD, Apple, Broadcom, Marvell, even TSMC itself for its IP work, all run on Synopsys software. The acquisition of Ansys, which closed in July 2025, extended that grip from silicon-level design into system-level simulation across automotive, aerospace and consumer electronics.

Synopsys’s moat is arguably the strongest of any company in this entire group, with the sole exception of ASML. Chip companies do not switch EDA vendors casually: design flows are deeply embedded, IP libraries are licensed under multi-year contracts, and engineering teams have spent decades on the same toolset. Switching costs are measured in years and tens of millions of dollars. The result is a record $11 billion backlog and a 73.5% gross margin business that compounds quietly through cycles.

Synopsys is a textbook case of a high-quality business going through a complex post-acquisition phase. Profit margins (as distinct from the 73.5% gross margin) have compressed from a pre-Ansys ~23% to ~13% as integration costs flow through and Ansys’s lower-margin profile dilutes the mix. Q1 2026 revenue grew 65.6% YoY to $2.4B as Ansys revenue is consolidated, but management cut full-year GAAP EPS guidance, and the market punished it.

The signals from sophisticated investors are unambiguous, however: NVIDIA took a 4.8M share stake in February 2026, Elliott Management disclosed a multibillion-dollar position in March, and the company itself authorized a $250M accelerated buyback. When the largest customer in the chip industry and one of the most successful activist investors in the world both buy at the same time, that is information.

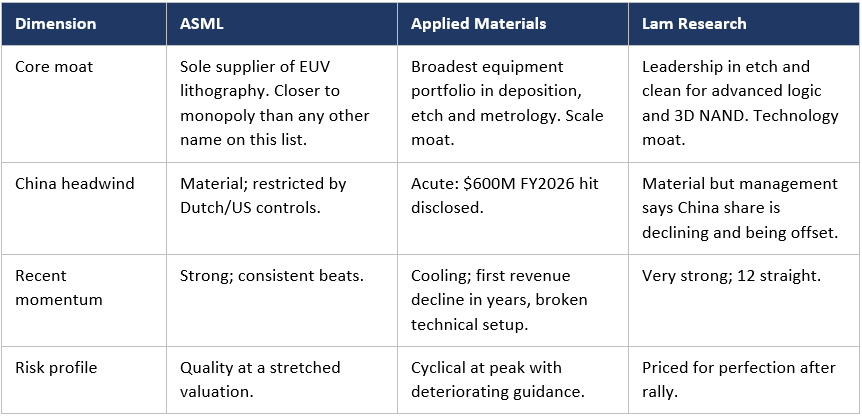

The Picks-and-Shovels Layer: Semiconductor Equipment

After EDA software designs a chip, the design has to be physically built, and the most defensive position in the chipmaking process belongs to the three companies that build the machines used to make every advanced chip on earth: ASML, Applied Materials and Lam Research. None of them sell directly to AI customers, yet none of the AI buildout happens without them.

ASML: The unrivalled monopoly

ASML occupies a position that genuinely has no parallel in the broader technology industry. It is the sole producer of EUV (Extreme Ultraviolet) lithography systems, the only machines capable of patterning the most advanced logic and memory nodes. There is no second source. Building a leading-edge fab without ASML is, today, simply impossible.

ASML is also the most diversified of the three by geography. Its installed base across Asia, Europe and the United States generates resilient service revenue that cushions the cyclicality of new tool sales. The company’s main vulnerability is geopolitical: its ability to ship to China is constrained by Dutch and U.S. export controls, and any further tightening (or, conversely, any retaliation from Beijing) hits the bottom line directly.

Applied Materials and Lam Research: The deposition-and-etch duopoly

Applied Materials and Lam Research are best understood as a duopoly that complements ASML rather than competes with it. Where ASML prints patterns, AMAT and LRCX deposit thin films, etch them away with atomic precision, clean the wafers and inspect the results. They are joined at the hip with the same customers (TSMC, Samsung, Intel, the memory makers) and exposed to the same cycles.

Despite their structural similarities, the two are currently telling investors very different stories. Lam Research has just delivered its 12th consecutive earnings beat, raised guidance and is in a clear uptrend. Applied Materials, by contrast, became the cautionary tale of late 2025: it posted its first quarterly revenue decline in years, disclosed that the BIS Affiliates Rule will cost roughly $600M of FY2026 revenue, and saw its stock reverse sharply after being rejected at $276. The divergence illustrates an important point: even within the same oligopoly, exposure to specific customers and product mixes can produce very different outcomes when geopolitics intervenes.

How the Three Compare

The Foundries: TSMC and Intel

Equipment is sold to the foundries, the actual manufacturers that turn silicon wafers into finished chips. Two very different companies in this group illustrate the chasm between dominance and aspiration in this most capital-intensive of all industries.

TSMC: The foundation of the AI economy

It is hard to overstate TSMC’s centrality. Every cutting-edge NVIDIA AI chip is fabricated by TSMC. More than half of TSMC’s 2nm capacity through 2026 is reserved for Apple. It is the only customer signed up for the upcoming A16 process node. AMD, Broadcom, Marvell, even Intel for its newer products, all rely on TSMC for their most advanced silicon. The company is, in a very literal sense, the single point of failure for the entire AI hardware buildout.

The risks are well known and largely geopolitical. TSMC sits in Taiwan, ninety miles from a country that has explicitly stated its intention to bring the island under its control. Any meaningful escalation in cross-strait tensions hits TSMC directly, and through TSMC it hits every customer on this page, NVIDIA most of all. The U.S. fab buildout is, in effect, a strategic insurance policy for both TSMC and the broader Western technology industry.

Intel: The foundry challenger

Intel is the inverse case: a former monopolist now trying to claw its way back to relevance. Where sentiment on TSMC is universally bullish and its execution excellent, Intel is a turnaround story with execution risk on every line of the income statement. Quarterly revenue is essentially flat in the $12.7-14.3B range; Q3 2025 delivered a +675% earnings surprise (against extremely depressed estimates); 2024 was, in the company’s own framing, brutal.

Two things make Intel worth watching. First, the validation: NVIDIA invested $5 billion in September 2025 (214.8M shares at $23.28), SoftBank announced a foundry deal, and the new CEO Lip-Bu Tan brought a deep semiconductor industry pedigree to the turnaround. Second, the technology: 18A process technology launched at CES 2026, with “Panther Lake” chips shipping and the CEO claiming the program over-delivered on its timeline.
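
The deal terms are internally consistent: multiplying the reported share count by the reported price recovers the $5 billion headline.

```python
shares_purchased = 214.8e6   # shares bought by NVIDIA, as reported above
price_per_share = 23.28      # USD per share, as reported above

investment_usd = shares_purchased * price_per_share
print(f"Implied investment: ${investment_usd / 1e9:.2f}B")
```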

The bear case is that Intel is competing with TSMC, a company with a decade-long head start, using a balance sheet that leaves little margin for error. The December 2025 news that NVIDIA reportedly halted testing of Intel’s 18A process for its own chips was a serious setback for foundry credibility, and a reminder that the same NVIDIA whose $5B investment validated Intel can also withdraw that validation. Until Intel demonstrates that 18A produces leading-edge silicon at TSMC-comparable yields and at scale, the foundry pivot remains a hope rather than a thesis.

TSMC vs Intel: The same business, different planets

Bottom line: TSMC and Intel both operate fabs but they are not in the same league today. TSMC is a quasi-monopoly running at full speed; Intel is a turnaround story whose entire foundry thesis hangs on whether 18A actually works in production. A diversified portfolio probably wants the former; the latter is a binary bet on execution.

The Challengers: AMD, Broadcom and Marvell

The three companies in this section all design AI silicon but are not NVIDIA, and that is essentially their entire investment thesis. Each of them attacks NVIDIA’s dominance from a different angle. AMD competes head-on with merchant GPUs. Broadcom and Marvell take the indirect path: they build custom ASICs for the very hyperscalers that buy NVIDIA chips, helping those customers reduce dependence on Jensen Huang’s roadmap and pricing power. The decelerating growth at NVIDIA is, in part, the success of these challengers showing up in someone else’s income statement.

AMD: The merchant-silicon challenger

AMD’s bull case is that it represents the only credible alternative to NVIDIA’s roughly 80% share of the AI accelerator market. The company has guided toward $100 billion in annual data-center chip revenue within five years, is targeting 55–58% gross margins and >35% revenue CAGR, and has accumulated impressive partnerships with the Department of Energy, OpenAI, Microsoft and HPE. It is delivering: revenue grew 36% YoY in Q3 2025, beating estimates, and the data-center segment is exploding. The risk is execution and valuation: at 49x forward P/E, the market is pricing in flawless delivery of an aggressive annual product cadence against a much larger, better-resourced incumbent. AMD’s gap with NVIDIA is essentially the CUDA software stack; closing it is a software problem at least as much as a silicon problem.

Broadcom: The custom-silicon partner to hyperscalers

Broadcom is, in some ways, the most financially imposing entity in the entire group. Its $73 billion AI backlog, $6 billion of AI revenue in a single quarter (Q4), and 50% claim on Samsung’s HBM output for Google TPUs make it arguably the second-most-important AI silicon company in the world. The widely reported claim that Google TPUs (which Broadcom co-designs) are 40% cheaper to run than NVIDIA’s GPUs is a major reason NVIDIA’s growth is decelerating. But the December 2025 selloff, a 15% post-earnings drop despite beating estimates, illustrates the danger of trading at a 100x-plus trailing P/E when management warns about margin pressure. Broadcom is also more concentrated: it relies heavily on a handful of large customer relationships (Google, Meta, OpenAI), whereas AMD has a broader OEM/ODM/cloud customer base.

Marvell: The smaller custom-silicon and connectivity specialist

Marvell sits in a position that overlaps significantly with Broadcom’s: both build custom ASICs for hyperscalers, both are heavily exposed to AI data-center demand, and the two are explicitly competing for the same accounts. Marvell’s data-center revenue is now 72% of total revenue (up from 40% in FY2024), and the custom ASIC business with Amazon and Microsoft is ramping toward $2B by 2028. Q3 FY2026 delivered $0.76 EPS (+77% YoY) on $2.08B of revenue (+37% YoY), and recent quarters have shown an EPS growth pattern of 5% → 123% → 158% → 77%.
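
The YoY percentages can be run backwards to recover the year-ago base, which makes the operating leverage visible. A short sketch using only the quoted figures (the helper name is ours):

```python
def year_ago_value(current: float, yoy_growth_pct: float) -> float:
    """Value one year earlier, given a current value and its YoY growth (%)."""
    return current / (1 + yoy_growth_pct / 100)


eps_prior = year_ago_value(0.76, 77)       # USD per share, year-ago quarter
revenue_prior = year_ago_value(2.08, 37)   # USD billions, year-ago quarter

# EPS growing faster than revenue means profits are scaling faster than sales.
leverage_ratio = 77 / 37

print(f"Year-ago EPS: ${eps_prior:.2f}")
print(f"Year-ago revenue: ${revenue_prior:.2f}B")
print(f"EPS growth / revenue growth: {leverage_ratio:.1f}x")
```

EPS growing roughly twice as fast as revenue means costs are rising much more slowly than sales, which is the operating leverage the AI ramp is delivering.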

Marvell has been actively reshaping itself for the AI era: it sold its automotive unit for $2.5B to focus entirely on data-center business, announced a $5B share buyback (nearly 10% of market cap), and acquired Celestial AI for $3.25B to add silicon photonics capabilities, the same technology layer that Lumentum dominates from a different angle. At $87 per share, having traded as high as $125 in early 2025 and as low as $47 in April 2025, Marvell is a high-beta proxy for hyperscaler AI capex and its own ability to win design slots that Broadcom also wants. The competitive intensity between Marvell and Broadcom for Microsoft custom silicon is one of the more interesting head-to-head battles in the entire AI hardware ecosystem.

Three challengers, one incumbent

AMD is the merchant-silicon challenger to NVIDIA. Broadcom and Marvell are the custom-silicon partners to hyperscalers, helping them build alternatives to NVIDIA, with Broadcom being the larger, richer-margin incumbent and Marvell the more aggressive, lower-priced challenger. All three depend heavily on hyperscaler capex, and all three are fundamentally bets on the gradual erosion of NVIDIA’s near-monopoly position.

The Plumbing: Memory and Connectivity Silicon

Around every GPU there is a constellation of supporting chips: memory, retimers, controllers, sensors. Four very different companies in this group illustrate just how much variance exists within “specialty semiconductors”.

Micron: The only American memory maker

Micron is the most direct beneficiary of the AI memory supercycle of any company in this list other than NVIDIA itself. AI servers consume 8–12x more DRAM than traditional servers, and high-bandwidth memory (HBM) is the most constrained component in the entire stack after GPUs themselves. Micron is one of only three global HBM suppliers, the sole U.S.-based major memory manufacturer, and the sole U.S. HBM supplier feeding NVIDIA’s AI platforms, a status that gives it a unique geopolitical moat as Washington and Beijing decouple their semiconductor supply chains.

The numbers are extraordinary: EPS grew from $0.42 in Q2 2024 to $3.03 in Q4 2025 (a +157% YoY result), and full-year revenue rose roughly 84% to $37.1B. The Cloud Memory unit alone went from $3.79B to $13.52B, a +257% jump. The risks are equally stark: the Chinese CAC ban has effectively closed that market, and the stock’s rally means it is priced for the cycle to continue indefinitely. Memory is historically the most cyclical segment in semiconductors; nothing in the data we have suggests this cycle has been repealed.
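
Converting the Micron figures into ratios makes the scale of the upcycle easier to compare with the other names here. A quick check using only the numbers quoted above:

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end / start - 1) * 100


eps_multiple = 3.03 / 0.42                     # quarterly EPS, Q2 2024 -> Q4 2025
cloud_memory_growth = pct_change(3.79, 13.52)  # Cloud Memory unit revenue, $B

print(f"Quarterly EPS multiple: {eps_multiple:.1f}x")
print(f"Cloud Memory growth: +{cloud_memory_growth:.0f}%")
```

A roughly 7x swing in quarterly EPS is operating leverage at work in a memory upcycle, and the same leverage cuts the other way when prices fall.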

Astera Labs: The AI-native connectivity pure-play

Astera Labs is a much smaller, much newer story. It IPO’d in March 2024 and designs PCIe/CXL retimers, CXL memory controllers, fabric switches and Ethernet modules, the chips that actually move data between GPUs, CPUs and memory inside an AI server. Revenue went from $65M in Q1 2024 to $230M in Q3 2025, more than 3x in 18 months. With a $28B market cap, it trades at extreme multiples that have already produced a 48% drawdown from the September peak.
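
“More than 3x in 18 months” is easier to compare with the other growth rates in this piece when expressed as a compound rate. A sketch, assuming six quarter-over-quarter steps from Q1 2024 to Q3 2025 (the helper name is ours):

```python
def compound_growth_rate(start: float, end: float, periods: int) -> float:
    """Constant per-period growth rate that turns `start` into `end` over `periods` steps."""
    return (end / start) ** (1 / periods) - 1


START, END = 65.0, 230.0  # quarterly revenue in $M, Q1 2024 and Q3 2025
QUARTERS = 6              # Q1 2024 -> Q3 2025

multiple = END / START
quarterly_rate = compound_growth_rate(START, END, QUARTERS)
annualized_rate = (1 + quarterly_rate) ** 4 - 1

print(f"Revenue multiple: {multiple:.2f}x")
print(f"Implied sequential growth: {quarterly_rate:.1%} per quarter")
print(f"Annualized equivalent: {annualized_rate:.0%}")
```

Roughly 23% compounded per quarter is equivalent to more than doubling revenue every year.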

Astera’s moat is narrower than Micron’s: it lives or dies by hyperscaler design wins and faces competition from larger, better-funded rivals. But its sole focus on AI infrastructure makes it the closest thing on this list to a pure-play option on the AI-server architecture.

Microchip and Synaptics: The cyclical and the turnaround

Microchip and Synaptics sit at the opposite end of the spectrum from Micron and Astera: neither is an AI darling, and both have been through significant pain. Microchip is in the middle of a brutal cyclical correction in industrial and automotive end markets: revenue fell 27% YoY in Q4 FY2025, net margins collapsed from 23.7% to 1.2%, and the company closed a fab and brought its 69-year-old founder back as CEO. Recovery is coming (book-to-bill has crossed 1.0x for the first time in three years), but the timing is uncertain and shareholders may be early.
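
Book-to-bill is what turns a recovery call into something measurable: it compares new orders booked with revenue shipped over the same period. A minimal sketch with hypothetical quarterly figures (invented for illustration, not Microchip’s):

```python
def book_to_bill(bookings: float, billings: float) -> float:
    """Orders received divided by revenue billed over the same period."""
    if billings <= 0:
        raise ValueError("billings must be positive")
    return bookings / billings


def backlog_direction(ratio: float) -> str:
    """Crude reading: above 1.0 the order backlog grows, below 1.0 it shrinks."""
    if ratio > 1.0:
        return "backlog building"
    if ratio < 1.0:
        return "backlog draining"
    return "balanced"


# Hypothetical quarters in $M, for illustration only (not Microchip's figures).
for bookings, billings in [(900.0, 1000.0), (1050.0, 1000.0)]:
    ratio = book_to_bill(bookings, billings)
    print(f"bookings {bookings:.0f} / billings {billings:.0f} = {ratio:.2f}x -> {backlog_direction(ratio)}")
```

A ratio crossing back above 1.0x means customers are ordering more than is being shipped, which is why it is watched as an early signal that a downturn is bottoming.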

Synaptics is a more interesting story. After acquiring Broadcom’s wireless IoT assets in early 2025, the company is repositioning from a legacy PC-parts vendor to an Edge AI platform built around its proprietary Astra processors. It is one of the few names in this group that could plausibly be described as cheap, although negative free cash flow and a -7% net margin remain valid concerns.

Optical: Lumentum’s Once-in-a-Generation Moment

Lumentum produces lasers and optical transceivers that move data inside AI clusters at speeds that copper can no longer support. It would normally be considered a quietly cyclical components company; in 2025 it became one of the most extraordinary stories on this list.

The fundamental story is genuine: Q2 FY2026 revenue hit $665.5M (+65% YoY), Q3 guidance implies +85% YoY, operating margins surged 1,730 basis points, and EPS nearly tripled. The company’s indium phosphide (InP) wafer fab capacity is completely sold out through 2027, and Lumentum is currently under-shipping demand by 25–30%. On March 2, 2026, NVIDIA announced a $2 billion direct investment as part of a broader $4B “optics blitz,” effectively anointing Lumentum as a critical strategic supplier. (Notably, the same shift toward optical interconnect that NVIDIA is now funding is one of the reasons Marvell paid $3.25B for Celestial AI earlier; the optics layer has become a strategic battleground all of its own.)

Contract Manufacturing: Celestica’s Quiet 28x

Celestica is the only Electronics Manufacturing Services (EMS) and original design manufacturer (ODM) company in the group. It does not design chips; it designs and builds the servers, switches and storage systems that hyperscalers fill their data centers with. Hardware Platform Solutions (HPS), its high-margin ODM business, grew 63% in 2024 and is now the company’s primary growth engine.

From a fundamentals perspective, Celestica is genuinely impressive: 13 consecutive earnings beats, Q4 2025 EPS up 70% YoY, FY2026 guidance raised to $16-17B in revenue, a healthy debt ratio and $299M of quarterly free cash flow. The stock has gone from ~$13 in early 2023 to $363 in November 2025, a roughly 28x move.
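
Multi-year multiples are very sensitive to the assumed starting price. Using the quoted $363 end point, a start at $10 works out to roughly 36x, while a 28x move implies a start near $13 (helper names are ours):

```python
def move_multiple(start_price: float, end_price: float) -> float:
    """How many times the starting price the ending price represents."""
    return end_price / start_price


def implied_start(end_price: float, multiple: float) -> float:
    """Starting price consistent with a given end price and move multiple."""
    return end_price / multiple


END_PRICE = 363.0  # November 2025 price quoted above

print(f"Move from $10: {move_multiple(10.0, END_PRICE):.1f}x")
print(f"Start implied by a 28x move: ${implied_start(END_PRICE, 28):.2f}")
```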

The concerns are familiar by now: a 53x P/E is rich for a manufacturing business; the top two customers represent 39% of revenue (a concentration that is arguably worse than Astera’s or Lumentum’s); and the early-February 2026 wave of insider selling, roughly $150M in a single week, strongly suggests management feels the stock is full. Celestica is a high-quality operator at the wrong end of a parabolic move.

The Neoclouds: CoreWeave and Nebius

CoreWeave and Nebius are direct competitors in what the industry now calls “neocloud”: specialized, GPU-first cloud platforms that compete with AWS, Azure and GCP for AI workloads. They both rent NVIDIA GPUs by the hour to companies training and running large models, which makes them, in effect, a leveraged way to express the NVIDIA thesis without owning NVIDIA itself. Both have sold-out capacity, both depend on close NVIDIA relationships (NVIDIA holds an equity stake in CoreWeave), and both are burning enormous amounts of capital to scale data-center footprints.

Their differences also matter. CoreWeave is the larger and more concentrated player: a $38B market cap, a $55B+ revenue backlog, and a customer list dominated by OpenAI, Microsoft and Meta. Nebius is smaller (about $22B market cap) but more globally diversified, with capacity in the U.S., Europe and the Middle East and a more varied customer base targeting the AI mid-market. CoreWeave is essentially a U.S. infrastructure-as-a-service play; Nebius is closer to a full-stack AI cloud, bundling developer tools, an AI Studio, education (TripleTen) and even autonomous driving (Avride).

The crucial point is that demand is not in question: both companies say they have “sold out everything we built.” The bottleneck is execution: power, land, GPU allocation, debt service. The neocloud thesis is, fundamentally, a bet that physical infrastructure can be built fast enough to monetize the multi-billion-dollar contracts already signed, and a bet that NVIDIA continues to allocate them GPU shipments preferentially over the hyperscalers themselves.

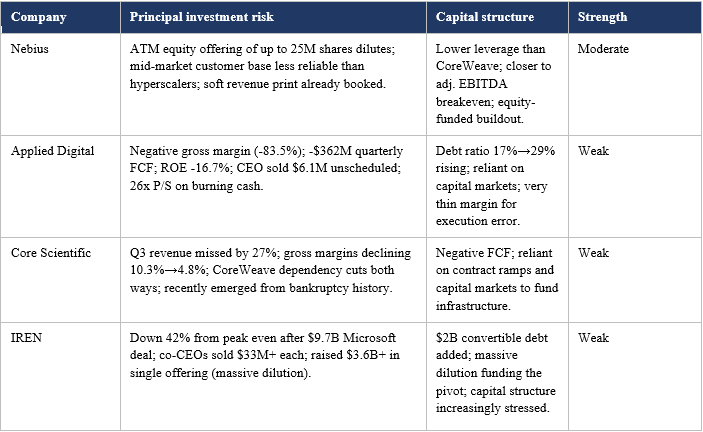

The Bitcoin-to-AI Pivot Trade: Applied Digital, Core Scientific, IREN

Three companies in this group share a remarkable origin story: they were all built to mine Bitcoin and are now trying to convert their power, real estate and operating expertise into a second life as AI infrastructure landlords. Applied Digital, Core Scientific and IREN are essentially executing the same strategic pivot, with different timing, partners and balance-sheet structures.

The bull case is genuinely compelling. Power, especially gigawatt-scale, grid-connected, low-cost power, has become the binding constraint on AI infrastructure growth. These companies already have it. Applied Digital secured two 15-year leases totaling 250 MW for its Polaris Forge campus and signed a $1.5B+ partnership with Babcock & Wilcox to deliver 1 GW. Core Scientific has 1,317 MW of contracted capacity and a deep partnership with CoreWeave (whose own guidance cut, ironically, was largely caused by Core Scientific’s delays). IREN signed a $9.7B AI cloud services agreement with Microsoft in November 2025 and is expanding its NVIDIA GPU fleet from 1,900 to roughly 10,900 units.

The bear case is even more compelling, and it is essentially the same story for all three. They are unprofitable and burning cash. They are diluting shareholders aggressively to fund the buildout: IREN raised $3.6B+ in a single offering. They are dependent on a small number of customers (often a single one). They are simultaneously running a volatile Bitcoin business while ramping an unproven AI business. Insiders are selling: IREN’s co-CEOs each sold $33M+ in September, and Applied Digital’s CEO sold $6.1M in unscheduled transactions. And Core Scientific’s earnings have missed estimates by enormous margins.

Applied Digital’s market cap has grown 16x to $8.2B in six months on roughly $230M of annualized revenue, roughly 36x sales for a company with negative gross margins and a -$362M quarterly free cash flow. Core Scientific missed Q3 revenue estimates by 27%. IREN is down 42% from its peak even after announcing the Microsoft contract. These are not investments in the conventional sense; they are long-dated options on NVIDIA continuing to allocate GPUs to companies that are not its largest hyperscaler customers.
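
Price-to-sales is market capitalization divided by (annualized) revenue. Plugging in the two inputs quoted above, and backing out the starting market cap implied by the 16x move:

```python
MARKET_CAP = 8.2e9          # USD, as quoted above
ANNUALIZED_REVENUE = 230e6  # USD, as quoted above
SIX_MONTH_MULTIPLE = 16     # market-cap increase over six months, as quoted above

price_to_sales = MARKET_CAP / ANNUALIZED_REVENUE
starting_market_cap = MARKET_CAP / SIX_MONTH_MULTIPLE

print(f"Price-to-sales: {price_to_sales:.1f}x")
print(f"Market cap six months earlier: ${starting_market_cap / 1e9:.2f}B")
```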

Deep Frameworks: Moats, Pricing Power, Cyclicality and Bottlenecks

It is one thing to walk through 21 companies layer by layer; it is another to compare them on the dimensions that actually determine long-term returns. The four frameworks in this section (moats, covering both the specific source of each company’s moat and who has the strongest one; pricing power; hidden cyclicality; and exposure to the physical bottlenecks gating the entire AI buildout) cut horizontally across the layers and reveal patterns that the layer-by-layer view obscures.

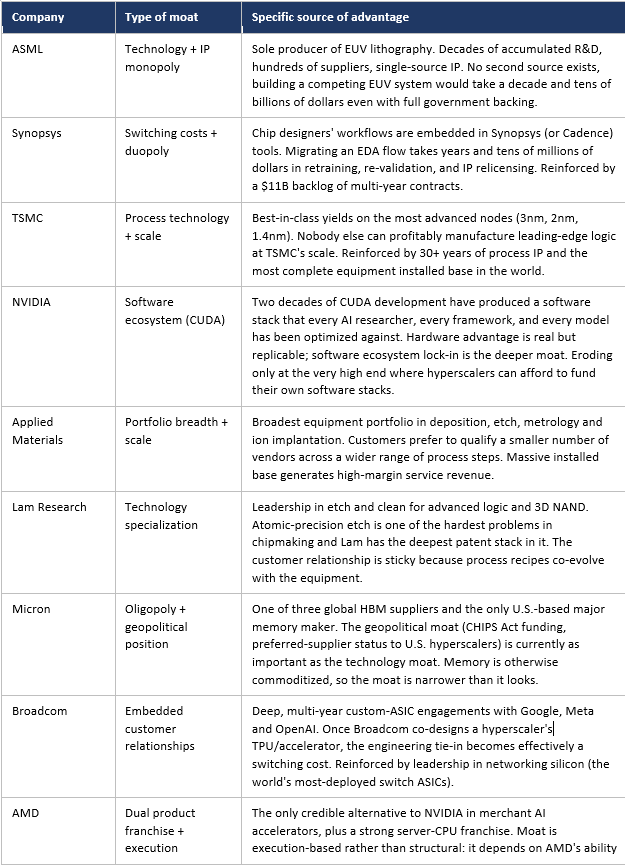

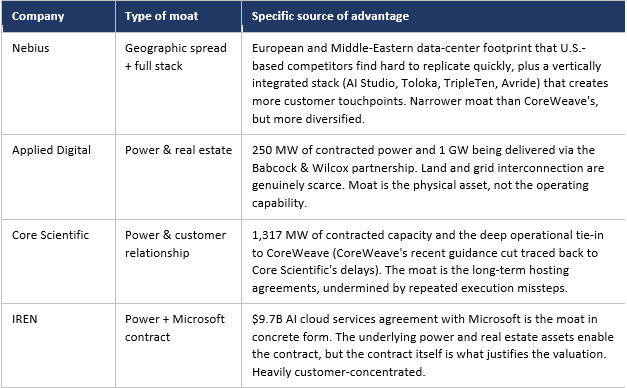

The Moat of Each Company

Who Has the Strongest Moat?

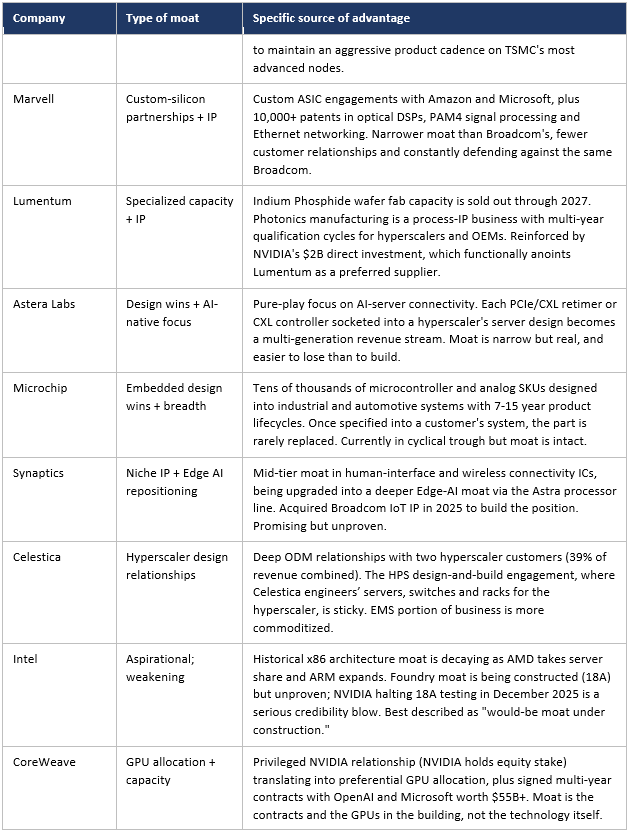

Moat strength is best assessed along two axes: how hard it would be for a competitor to replicate (replication cost) and how stable the moat is against forces actively trying to erode it (durability). On both axes, the rankings are remarkably consistent, and they place a small number of companies far above the rest.

ASML is the clearest #1. There is no second-source EUV vendor, and there will not be one in the next decade. The replication cost is effectively infinite at current ROI requirements: it would take a state-funded program (China is trying) the better part of fifteen years to approach what ASML has built, and even then patent and trade-secret enforcement would create permanent friction. The only material risk is geopolitical (export controls limiting addressable market), not competitive.

Synopsys is #2 by switching costs. Together with Cadence, Synopsys forms a duopoly that controls the workflow of every chip designer. Migrating an EDA flow is a multi-year, multi-million-dollar undertaking that risks slipping critical product schedules. Customers stay because changing is more expensive than tolerating any plausible price increase. The Ansys acquisition extends the same logic into system-level simulation. The $11B backlog is the moat made visible.

TSMC is #3 by capability. Every cutting-edge AI chip is fabricated by TSMC because nobody else can do it at the required yield and scale. Samsung is competitive at older nodes; Intel is trying with 18A. But for 3nm and 2nm production at hundreds of thousands of wafers per quarter, with 1.4nm next on the roadmap, TSMC stands alone. The moat’s main vulnerability is not commercial but military: a cross-strait crisis would damage everyone on this list, but it would damage TSMC most.

NVIDIA is #4, with an asterisk. The CUDA moat is genuinely deep and would take a competitor 5-10 years to replicate from scratch. But unlike the customers of ASML, Synopsys or TSMC, NVIDIA’s largest customers are actively, deliberately funding the construction of alternatives. Google’s TPUs (co-designed with Broadcom) are reportedly 40% cheaper to run; Amazon and Microsoft are commissioning their own custom silicon from Marvell and Broadcom; the Chinese AI ecosystem is being forced by export controls to build a domestic alternative. NVIDIA’s moat is a Tier-1 moat under sustained, well-funded attack, the only such case on this list.

After these four, the next tier is occupied by the wafer-fab-equipment (WFE) duopoly of Applied Materials and Lam Research, Micron in HBM specifically, and Broadcom in custom ASICs. Their moats are real but narrower in scope or more contestable. Below that come execution-based and contract-based moats (AMD, Marvell, Lumentum, Celestica, Astera, the neoclouds), which can be lost as well as won. At the bottom are Intel’s foundry aspirations and the Bitcoin pivots’ physical-asset positions, which are not yet moats so much as raw materials from which a moat might one day be assembled.

Who Has Pricing Power?

Pricing power is the practical expression of a moat. A company with genuine pricing power can pass cost increases through to customers and even raise prices in real terms, expanding margins through cycles. A company without it gets squeezed when its inputs get more expensive or its customers get more concentrated. The 21 companies span the full spectrum.
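
The mechanism is easiest to see with numbers. A hypothetical sketch (the $100 price, $60 unit cost and 10% cost shock are invented for illustration, not any company’s figures): the same input-cost increase squeezes a price-taker’s gross margin, leaves a full pass-through business where it was, and widens it for a company that can raise prices in real terms.

```python
def gross_margin(price: float, unit_cost: float) -> float:
    """Gross margin as a fraction of price."""
    return (price - unit_cost) / price


def margin_after_shock(price: float, unit_cost: float,
                       cost_shock: float, price_change: float) -> float:
    """Gross margin after unit costs rise by `cost_shock` and the selling price
    changes by `price_change` (both as fractions, e.g. 0.10 = 10%)."""
    return gross_margin(price * (1 + price_change), unit_cost * (1 + cost_shock))


# Hypothetical starting point: $100 price, $60 unit cost -> 40% gross margin.
PRICE, COST, SHOCK = 100.0, 60.0, 0.10

scenarios = {
    "price-taker (price flat)": 0.00,
    "full pass-through (price +10%)": 0.10,
    "real price increase (price +15%)": 0.15,
}
print(f"starting margin: {gross_margin(PRICE, COST):.1%}")
for label, price_change in scenarios.items():
    print(f"{label}: {margin_after_shock(PRICE, COST, SHOCK, price_change):.1%}")
```

Full pass-through only holds the margin steady; it takes a price rise larger than the cost increase, which is pricing power in real terms, to expand it.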

Strong pricing power: ASML can essentially name its price for EUV systems; the only restraint is its own concern about being seen as a monopolist. Synopsys has demonstrated price escalators in multi-year contracts that customers absorb without negotiation. TSMC has raised wafer prices repeatedly through the AI cycle, with 5nm and 3nm prices rising 8-15% per generation, and customers have paid because there is no alternative. NVIDIA’s H100 and Blackwell pricing has moved largely upward despite explicit complaints from hyperscalers; the fact that hyperscalers are now paying Broadcom and Marvell for alternatives is itself evidence of how much pricing power NVIDIA has been exercising.
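
Per-generation increases compound for a customer that moves up the node roadmap. A sketch, assuming (our simplification) that the 8-15% range applies at each of two successive node transitions:

```python
def cumulative_increase(per_step: float, steps: int) -> float:
    """Total increase after `steps` successive increases of `per_step` each."""
    return (1 + per_step) ** steps - 1


for per_generation in (0.08, 0.15):  # low and high end of the quoted range
    total = cumulative_increase(per_generation, steps=2)
    print(f"{per_generation:.0%} per generation, two generations: +{total:.1%} cumulative")
```

Roughly 17-32% of cumulative price increases over two node transitions, absorbed by customers with no alternative, is what this kind of pricing power looks like in practice.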

Moderate pricing power: Applied Materials and Lam Research can price tools at premium levels but face customer pushback when capex budgets tighten. Micron has genuine pricing power in HBM specifically (sold-out, allocated capacity) but is a price-taker in commodity DRAM and NAND, which is why memory remains structurally cyclical despite the AI overlay. Broadcom can extract premium pricing from custom-ASIC engagements but only because the alternative for the customer (a multi-year internal silicon program) is even more expensive.

Weak pricing power: AMD competes directly with NVIDIA on price-performance and has limited room to raise prices without losing share. Marvell, fighting Broadcom for the same hyperscaler accounts, is the more aggressive (i.e. lower-margin) of the two precisely because it is the challenger. Lumentum has pricing power right now (capacity is sold out), but the moment InP wafer capacity expands, that pricing power compresses; this is a duration-of-shortage trade, not a structural pricing-power story. Astera is similar.

Essentially zero pricing power: Celestica is a contract manufacturer; its margins are negotiated annually with hyperscaler customers who hold all the leverage. The neoclouds (CoreWeave, Nebius) sell GPU compute on multi-year contracts at competitive market rates, not premium pricing; their growth comes from volume, not price. The Bitcoin pivots (Applied Digital, Core Scientific, IREN) are even more constrained: they are essentially leasing power and floor space, often to a single customer, in long-term contracts where the customer dictates terms. Intel, in its current state, is offering foundry pricing concessions to attract initial customers; pricing power on the foundry side is a future aspiration, not a current reality.

The pattern is clear: pricing power tracks moat strength almost perfectly. The companies with structural monopolies or duopolies (ASML, Synopsys, TSMC) have it; the companies with contract-based positions and physical-asset moats do not. Investors should be skeptical of any thesis that depends on a Tier 4 or Tier 5 moat company “raising prices as demand grows.” That is not how those businesses work.

Who Is More Cyclical Than They Look?

Almost every company on this list will tell investors that AI has changed their cyclical profile permanently. This is partially true and partially the marketing version of “this time is different.” The honest answer is that some of these businesses really are structurally less cyclical than they used to be, while others remain as cyclical as ever; the cycle is simply in their favor for now.

A useful sanity check: when AI capex eventually slows, which it will, the equipment makers and EDA software companies will see the smallest revenue declines. Memory and the merchant chip designers will see the largest. The neoclouds and Bitcoin pivots will face existential questions about contract enforcement, dilution, and survival. This ranking is the inverse of rally-stage stock performance: the most cyclical names have outperformed by far the most in 2024-25, and they would underperform the most in a downturn.

Financial Quality and Investment Risk

Moats and bottlenecks describe a company’s competitive position; financial quality describes how well that position converts into shareholder returns. Two companies can sit in the same industry layer with comparable moats and produce wildly different financial outcomes depending on how efficiently they deploy capital, how cleanly earnings convert into cash, whether margins are expanding or compressing, and how much debt they carry. The five sub-sections below take these questions in turn: margin direction, cash generation, return on invested capital (the most fundamental measure of economic value), and finally the practical questions of what could go wrong (investment risks and capital structure).

One caveat is worth stating up front: the executive summaries this article synthesizes do not provide identical disclosure across all 21 companies. Where specific numbers are available, the analysis cites them; where they are not, the analysis reasons from the business model and the disclosures that are available. The relative rankings are robust even where exact figures are unavailable.

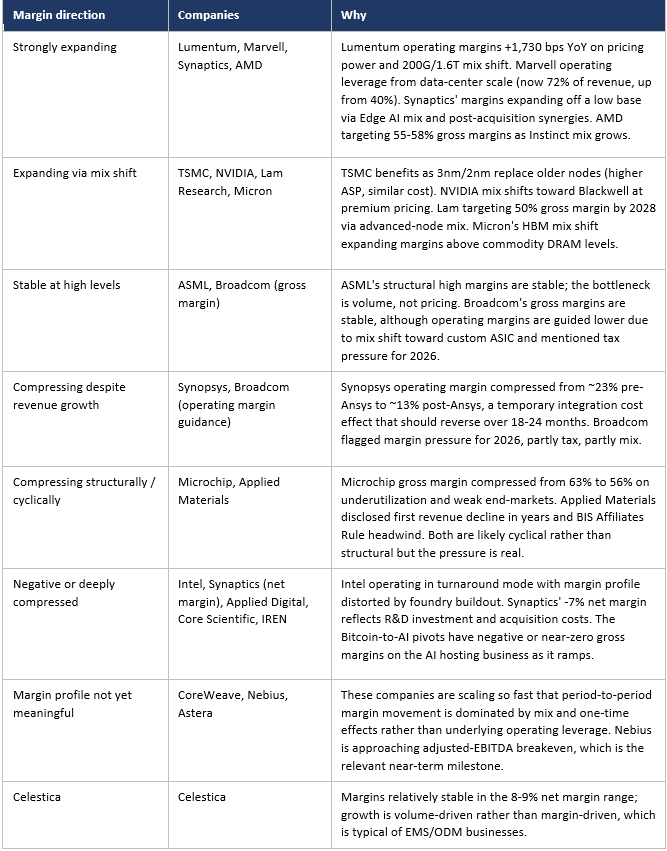

Which Companies Have Expanding Margins, and Why?

Margin direction is one of the most powerful signals in equity analysis. Expanding margins typically reflect some combination of pricing power, scale economies, mix shift to higher-value products, or cost discipline. Compressing margins typically reflect competitive intensity, mix shift to lower-value products, integration costs, or scale issues. The 21 companies show a wide range of margin trajectories.

Three observations stand out. First, Lumentum’s margin expansion is the most extreme on the list and the least likely to be sustained at this rate: 1,730 basis points in twelve months reflects supply-side scarcity rather than a structural cost advantage. Second, Synopsys’s margin compression is the most temporary: integration costs from a major acquisition usually reverse on a 12–24-month timeline, and the underlying business has not changed. Third, the Bitcoin-to-AI pivots are not really “margin compression” stories so much as “margin construction” stories: they are trying to build a positive-margin business out of what is currently a negative-gross-margin operation, and the outcome is genuinely uncertain.
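As a quick arithmetic check on the Lumentum figure, the sketch below backs out the starting margin from the current one. It assumes, which the text does not state outright, that the 1,730 basis points and the 25.2% operating margin cited in the ROIC discussion refer to the same measure; the function names are illustrative.

```python
def bps_change(old_margin: float, new_margin: float) -> float:
    """Margin change in basis points; margins are decimal fractions (0.252 = 25.2%)."""
    return (new_margin - old_margin) * 10_000


def implied_prior_margin(current_margin: float, change_bps: float) -> float:
    """Back out the starting margin from the current margin and the change in bps."""
    return current_margin - change_bps / 10_000


# Assumption: the 1,730 bps expansion and the 25.2% figure both describe operating margin.
prior = implied_prior_margin(0.252, 1_730)
print(f"Implied operating margin twelve months earlier: {prior:.1%}")
print(f"Check: {bps_change(prior, 0.252):,.0f} bps of expansion")
```

On that assumption, Lumentum went from a single-digit operating margin to a mid-twenties one in a year, which is why the text treats the move as scarcity pricing rather than a new structural level.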

Which Companies Generate the Most Cash Flow, and Why?

Cash flow is the financial reality check on every other metric. A company can grow revenue rapidly, post strong earnings, and still burn cash; conversely, a slow-growing business can produce a torrent of cash that funds buybacks, dividends, and acquisitions. The cash flow profiles across the 21 companies vary by orders of magnitude.

Massive, consistent cash generators: Broadcom ($7.4B free cash flow in Q3 alone), TSMC (multi-billion-dollar quarterly FCF, funding the $100B+ U.S. expansion from internal cash), NVIDIA (data-center revenue at $40B+ per quarter at AI-accelerator margins translates into eye-watering FCF), Synopsys ($809M FCF in Q1 2026 even during integration), ASML (consistent multi-billion-euro annual FCF), and Lam Research (committing >85% of FCF to shareholder returns implies strong absolute generation). These companies have business models in which most revenue converts to cash, and their capex requirements, while large in absolute terms for some, are well below operating cash flow.

Solid but more variable: Applied Materials (profitable, generating cash, but trajectory flattening), Micron ($299M+ implied FCF in good quarters but historically negative in cyclical troughs), AMD (now reliably FCF-positive after years of investment), Marvell (recently fortified by $2.5B auto unit sale, executing a $5B buyback), Celestica ($299M quarterly FCF on a clean capital structure), Lumentum (newly large FCF from operating margin surge, sustainability question open).

Currently break-even or modestly cash-flow-generative: Microchip (cash flow squeezed by the cyclical trough but balance sheet still serviceable), Intel (cash flow distorted by massive foundry capex; reports inconsistent profitability), Astera Labs (small absolute base; growing rapidly but capital intensity unclear).

Cash burners (negative free cash flow): Synaptics (-$54M FCF in Q1 2026, negative for 7 of the last 8 quarters), Applied Digital (-$362M FCF in Q1 2026, accelerating), Core Scientific (consistently negative as it builds out HPC infrastructure), CoreWeave (massive capex commitments to deliver on a $55B+ backlog), Nebius (closer to adjusted-EBITDA breakeven but still investing heavily), IREN (raising $3.6B in equity to fund the buildout). The Bitcoin pivots and the neoclouds are united by the same dynamic: they are deploying capital faster than they are generating it, and depending on either contracted revenue ramps or capital markets to bridge the gap.

What separates the strong cash generators from the burners is essentially business-model design. The cash generators are either software/IP businesses (Synopsys, ASML’s IP, NVIDIA’s CUDA-leveraged hardware) or oligopolistic hardware businesses earning premium prices (Broadcom, TSMC, Lam Research). The cash burners are either capital-intensive landlords (Bitcoin pivots, neoclouds) or businesses in transition that have not yet reached scale (Synaptics, Astera). Critically, cash burning is not inherently bad: many great businesses burn cash early in their lives. But it is a different financial profile that demands different valuation discipline. A 26x P/S multiple on a cash burner is not the same investment as a 56x P/E multiple on a cash compounder.
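To make the last comparison concrete: a P/S multiple converts to a P/E multiple by dividing by net margin, since (P/S) / (E/S) = P/E. The sketch below asks what net margin the cash burner would need for 26x sales to equal 56x earnings, and what 26x sales implies at a few hypothetical margins. It holds revenue constant, so it ignores growth between today and steady state; treat it as a back-of-the-envelope illustration, not a valuation model.

```python
def implied_pe(price_to_sales: float, net_margin: float) -> float:
    """P/E implied by a P/S multiple at a given net margin: (P/S) / (E/S) = P/E."""
    if net_margin <= 0:
        raise ValueError("P/E is undefined for zero or negative earnings")
    return price_to_sales / net_margin


def breakeven_margin(price_to_sales: float, target_pe: float) -> float:
    """Net margin at which a given P/S multiple equals a given P/E multiple."""
    return price_to_sales / target_pe


# The two multiples quoted in the text: 26x sales vs 56x earnings
print(f"Net margin needed for 26x P/S to equal 56x P/E: {breakeven_margin(26, 56):.1%}")

# Hypothetical steady-state net margins for the cash burner
for margin in (0.10, 0.20, 0.30):
    print(f"At a {margin:.0%} net margin, 26x sales is {implied_pe(26, margin):.0f}x earnings")
```

The point of the exercise is that the sales multiple only looks comparable once the business reaches a net margin in the mid-forties, which few capital-intensive operators achieve.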

Which Companies Have the Highest ROIC, and Why?

Return on invested capital is essentially the question “how much profit does this business generate per dollar of capital tied up in it?” Companies with high ROIC are characterized by some combination of three things: high margins, low capital intensity (asset-light business models or efficient capital deployment), and pricing power that prevents margin erosion. The 21 companies fall into roughly four groups.
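The drivers above fall out of a standard decomposition, ROIC = NOPAT / invested capital = (NOPAT / revenue) × (revenue / invested capital): margin times capital turnover. The sketch below uses hypothetical figures, not reported company data, to show how an asset-light IP business, a contract manufacturer and a GPU landlord in its build phase land in very different places. Real-world ROIC definitions vary (for example in how goodwill and excess cash are treated), so this is a sketch of one common formulation.

```python
from dataclasses import dataclass


@dataclass
class Business:
    name: str
    revenue: float           # annual revenue, $B (hypothetical)
    nopat: float             # net operating profit after tax, $B (hypothetical)
    invested_capital: float  # operating capital tied up in the business, $B (hypothetical)

    @property
    def nopat_margin(self) -> float:
        return self.nopat / self.revenue

    @property
    def capital_turnover(self) -> float:
        # Revenue generated per dollar of invested capital (the inverse of capital intensity)
        return self.revenue / self.invested_capital

    @property
    def roic(self) -> float:
        # ROIC = NOPAT / invested capital, written as margin x turnover
        return self.nopat_margin * self.capital_turnover


# Hypothetical figures chosen only to illustrate the two levers
examples = [
    Business("Asset-light IP business", revenue=10.0, nopat=3.5, invested_capital=8.0),
    Business("Contract manufacturer", revenue=10.0, nopat=0.5, invested_capital=4.0),
    Business("GPU landlord (build phase)", revenue=2.0, nopat=-0.3, invested_capital=20.0),
]

for b in examples:
    print(f"{b.name:28s} margin {b.nopat_margin:6.1%} x turnover "
          f"{b.capital_turnover:4.2f} = ROIC {b.roic:6.1%}")
```

A thin margin can be partly offset by high turnover, as the contract manufacturer shows; a negative margin on a huge capital base cannot, which is the arithmetic behind the lowest tier.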

Tier 1, very high ROIC (typically 30%+ at peak): Synopsys and ASML. Both are software-and-IP-heavy businesses with structurally low capital intensity relative to earnings power. Synopsys pairs a 73.5% gross margin with very modest capital requirements (its main investment is R&D, which is expensed rather than capitalized), so a large share of revenue converts into reinvestable cash. ASML, despite being a hardware business, has a similar profile because each EUV system represents enormous economic value (~$200M+ ASP) relative to a manageable invested capital base, and service revenue further sweetens the math.

Tier 2, high ROIC (typically 20-30%): TSMC, NVIDIA, Broadcom, Lam Research. TSMC is the unusual case here: a fab-heavy business would normally earn far lower ROIC, but TSMC’s pricing power on leading-edge nodes and its scale advantages allow it to earn premium returns despite enormous capex. NVIDIA’s ROIC has surged on the back of AI accelerator pricing; the question is whether it can be sustained as competitors close in. Broadcom’s 26-37% net margin combined with disciplined capex (it is largely fabless) produces consistently high returns. Lam Research is targeting ~50% gross margins by 2028 and committing >85% of FCF to shareholder returns, both of which signal confidence in high-ROIC compounding.

Tier 3, moderate ROIC (typically 10-20% at peak): Applied Materials, Micron (peak), AMD, Marvell, Lumentum (currently above this range, but normalizing). These are real businesses earning real returns, but capital intensity, competitive dynamics, or cyclicality keep ROIC from sustaining at Tier-1 or Tier-2 levels. Lumentum’s current operating margin of 25.2% is genuinely high, but it reflects a temporary supply-demand imbalance rather than a structural cost-curve advantage; through-cycle ROIC is more likely in the 15-20% range.

Tier 4, low or negative ROIC: Celestica (single-digit net margin on a manufacturing capital base limits ROIC to low double-digits at best), Microchip (currently in the cyclical trough, structurally a Tier-3 business in better cycles), Synaptics (negative net margin), Intel (depressed by foundry buildout costs), CoreWeave and Nebius (capex-heavy, cash-burning), and the Bitcoin pivots (negative ROIC across the board). Importantly, low ROIC does not necessarily mean a bad investment; it means the math depends on revenue growth far outpacing capital deployment, which is exactly the bull case for the neoclouds and the Bitcoin pivots.

ROIC tracks moat strength and pricing power almost perfectly. The companies with the highest ROIC are the ones with structural monopolies or duopolies (ASML, Synopsys, TSMC, NVIDIA) plus the few with genuine pricing power without monopoly status (Broadcom, Lam Research). The companies with the lowest ROIC are the ones operating in commodity layers (contract manufacturing, GPU rental, power hosting) where competitive pressure squeezes margins. Investors looking for compounders should focus on Tier 1 and Tier 2; investors looking for asymmetric option-style returns should look at Tier 4, but with their eyes open about what they are buying.

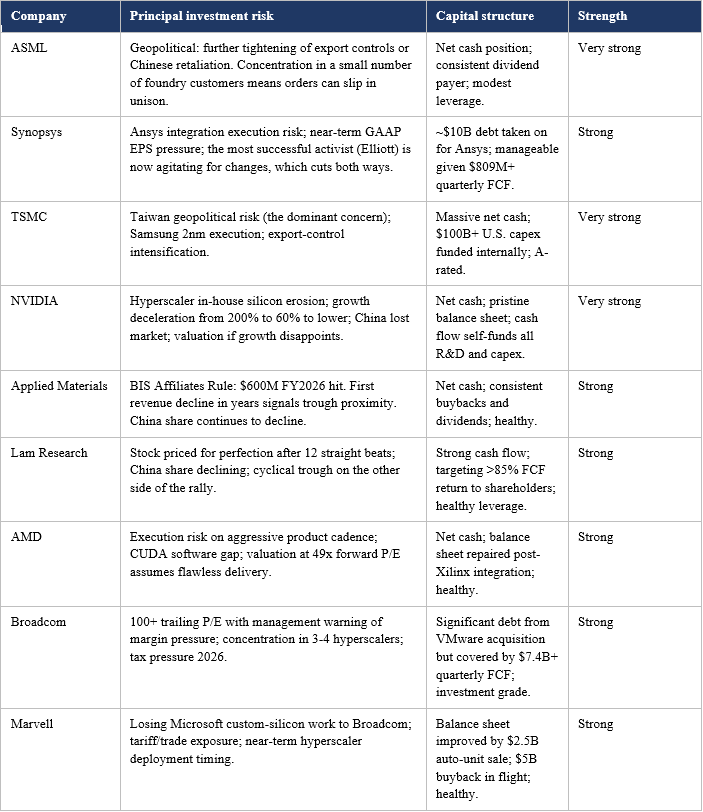

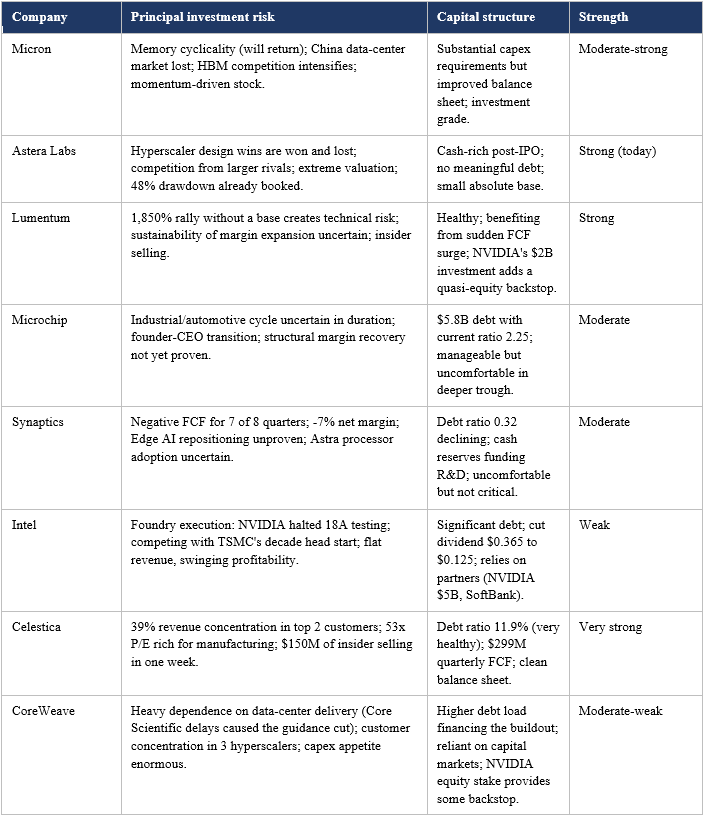

Investment Risks and Capital Structure Strength

Every company on this list has specific risks that an investor should be able to articulate before owning the stock. The catalog below summarizes the principal investment risk and the capital structure strength of each. “Capital structure strength” combines balance-sheet leverage, debt-service capacity, and the company’s ability to fund its capital plan from internal sources rather than capital markets, which becomes critical in a downturn.

Capital Structure: A Closer Look

Capital structure becomes the single most important variable in any downturn or industry-specific shock. The companies on this list separate cleanly into three groups based on their ability to weather adverse conditions:

Fortress balance sheets: ASML, TSMC, NVIDIA, Celestica, Synopsys, AMD, Lam Research and Applied Materials all hold substantial net cash or modest net debt that is comfortably covered by operating cash flow. Several (ASML, TSMC, NVIDIA) could fund their entire current capex program for multiple years from existing cash. Synopsys took on ~$10B of debt for the Ansys acquisition but the deal added a high-margin business that services the debt easily. These companies can play offense in a downturn, buying back stock, acquiring distressed competitors, or simply continuing to invest while everyone else cuts.

Adequate but not bulletproof: Broadcom carries significant debt from VMware but generates so much cash ($7.4B+ per quarter) that the leverage is comfortable. Marvell improved its position materially via the auto-unit divestiture. Micron has heavy capex but an improved capital structure. Microchip has $5.8B of debt that is manageable in the current cycle but would become uncomfortable if the trough extends further. Synaptics is funding its repositioning from cash reserves rather than operating cash flow, a workable model but one that limits how much time it has.
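The Microchip point can be framed as simple debt-coverage arithmetic: how many years of free cash flow would it take to retire the $5.8B? The sketch below uses that debt figure from the text but hypothetical annual FCF scenarios (they are not Microchip’s reported numbers), and it ignores cash on hand, interest, and refinancing.

```python
def years_to_repay(debt: float, annual_fcf: float) -> float:
    """Years of free cash flow needed to retire debt; infinite if FCF is not positive."""
    if annual_fcf <= 0:
        return float("inf")
    return debt / annual_fcf


MICROCHIP_DEBT_B = 5.8  # gross debt cited in the text, $B (cash on hand ignored)

# Hypothetical annual FCF scenarios in $B, for illustration only
scenarios = {"healthy cycle": 2.0, "trough": 1.0, "extended trough": 0.4}

for label, fcf in scenarios.items():
    years = years_to_repay(MICROCHIP_DEBT_B, fcf)
    print(f"{label:16s} FCF ${fcf:.1f}B -> {years:.1f} years to repay")
```

The same debt load goes from a few years of cash flow to well over a decade as FCF shrinks, which is what “uncomfortable if the trough extends” means in practice.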

Stressed or aggressive: Intel, the neoclouds (CoreWeave more so than Nebius), and especially the Bitcoin pivots all have capital structures that depend on continued access to capital markets and successful contract ramps. Intel has cut its dividend, taken in $5B from NVIDIA and announced a SoftBank deal precisely because it needs the capital. CoreWeave’s debt load financing the buildout creates real risk if data-center deliveries slip further. The Bitcoin pivots are the most stressed: IREN took on $2B+ in convertibles, Applied Digital’s debt ratio jumped from 17% to 29%, and all three are aggressively diluting shareholders. In a downturn, these companies would face the classic capital-intensive-startup problem: the cash needs do not stop just because the capital markets close.

The capital-structure tiers map closely onto the moat and ROIC tiers, with Celestica (a clean balance sheet on a low-ROIC, low-pricing-power business) the main exception. This is not a coincidence: high-ROIC businesses with deep moats generate enough cash to maintain fortress balance sheets without external financing, which gives them the optionality to keep investing through cycles. Low-ROIC businesses with weak or unproven moats need external capital to grow, which makes them fragile precisely when external capital becomes scarce. The investor implication is that the top of this list can be owned in any market regime; the bottom requires a benign capital-markets environment to survive, much less thrive.

A useful synthesis across all five questions in this section: Synopsys, ASML, TSMC, NVIDIA, Lam Research, Broadcom and AMD form a coherent shortlist of high-quality businesses, those with high ROIC, strong cash generation, stable or expanding margins (Synopsys’s Ansys-driven compression being a temporary exception), durable moats and fortress balance sheets. Lumentum, Marvell, Micron and Celestica are second-tier: real businesses with real returns but more cyclicality, more competitive pressure, or more concentration risk. The neoclouds, the Bitcoin pivots and Intel are explicit bets that capital deployed today will create economic value in the future, with capital structures that range from aggressive to dangerously stretched.

Common Threads Across the 21 Companies

Almost everyone is priced for perfection

With the partial exceptions of Synaptics, Microchip, Intel and (post-correction) Synopsys and NVIDIA itself, every company in this group trades at a multiple that already incorporates strong AI tailwinds. Broadcom at 100+ trailing P/E, Celestica at 53x for a manufacturer, Astera at an extreme P/S, Nebius at 45x sales, Applied Digital at 26x sales while burning cash: these are not bargains. NVIDIA at 38.7x forward P/E is, paradoxically, one of the more reasonably priced names in the group on traditional growth metrics, though the question is whether 60% growth is sustainable. The shared risk is multiple compression rather than fundamental disappointment: even good earnings can produce bad stock outcomes when expectations are this elevated, as Broadcom’s 15% post-earnings drop and NVIDIA’s own technical breakdown have demonstrated.
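Two pieces of arithmetic sit behind this paragraph. Because price equals EPS times P/E, a stock can fall even as earnings grow if the multiple compresses; and a growth-adjusted multiple such as the PEG ratio (forward P/E divided by expected growth in percent) is one common way to judge whether a high multiple is reasonable relative to growth. The sketch assumes the PEG ratio is the metric intended and that the 60% figure is an earnings-growth rate; the de-rating scenario is hypothetical.

```python
def price_return(eps_growth: float, pe_start: float, pe_end: float) -> float:
    """Share-price return when EPS grows by eps_growth and the P/E moves from pe_start to pe_end.

    Price = EPS x P/E, so (1 + return) = (1 + EPS growth) x (pe_end / pe_start).
    """
    return (1 + eps_growth) * (pe_end / pe_start) - 1


def peg(forward_pe: float, growth_pct: float) -> float:
    """PEG ratio: forward P/E divided by expected growth rate, in percent."""
    return forward_pe / growth_pct


# Hypothetical: earnings grow 20%, but the multiple de-rates from 100x to 70x
print(f"Price return despite 20% EPS growth: {price_return(0.20, 100, 70):.1%}")

# NVIDIA figures from the text (38.7x forward P/E, 60% growth), plus a hypothetical slowdown
print(f"NVIDIA PEG at 60% growth: {peg(38.7, 60):.2f}")
print(f"NVIDIA PEG if growth halves to 30%: {peg(38.7, 30):.2f}")
```

The first line is the “good earnings, bad stock” case in one formula; the last two show why the sustainability of growth, not the headline multiple, drives the NVIDIA debate.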

China is a single risk factor that affects most of the group

Twelve of these companies have explicit, material China exposure: NVIDIA, ASML, Applied Materials, Lam Research, TSMC, Intel, AMD, Broadcom, Marvell, Micron, Microchip and Synaptics. The U.S.-China tech decoupling is not a tail risk; it is a base-case business reality, and it is still intensifying. NVIDIA itself has stated that its competitive position has been harmed by export controls and that revenue from China has declined significantly. Applied Materials disclosed a $600M FY2026 hit from the BIS Affiliates Rule. Micron has effectively lost the Chinese data-center market. TSMC sits ninety miles from the People’s Republic. Investors who hold a basket of these stocks should understand they are holding a single, undiversified bet on how that policy environment evolves.

The bottleneck principle ties it all together

Demand for AI compute is essentially uncapped at current prices; the constraints are physical and capability-based. The companies that own the constrained resources command pricing power and earn economic rent. The companies queueing on the other side pay it. The further upstream the bottleneck, the more durable the rent extraction. This single principle explains most of the dispersion in valuation, margin profile, and stock-price performance across the 21 companies on this list.

Bottom Line

The most useful frame for thinking about these 21 companies is not which one will go up the most, but which combination of them, if any, you would want to own through a downturn. Synopsys (with its irreplaceable EDA software), the semiconductor equipment trio (ASML, Lam Research, Applied Materials), TSMC and Micron are the most fundamentally protected: even if AI capex slows, the world still needs leading-edge chips for everything else, and the equipment installed base, the EDA tool licenses, and the foundry capacity all generate revenue regardless. NVIDIA sits in a unique category: the dominant operating story of the past three years, but also the name whose growth is decelerating and whose customers are deliberately building alternatives. The neoclouds, Intel’s foundry pivot and the Bitcoin pivots, by contrast, are explicit bets on continued AI-specific capital deployment; they would be hit hardest by any pause in hyperscaler spending.

The question to ask of each name is therefore the same: what is this company actually selling, who has to buy it, and what would have to be true for that customer to stop buying? The further down the stack a company sits (closer to the irreplaceable physical machinery, the proprietary IP, and the design software that everyone has built their workflows around), the easier that question is to answer in its favor. The closer it sits to the AI workload itself, the harder. NVIDIA, at the very top of the silicon stack, is the single name where the question matters most, because the answers given by Amazon, Google, Microsoft and Meta will largely determine the trajectory of the other twenty companies on this list.

Disclaimer

This report is provided strictly for informational purposes and does not constitute financial advice, an offer to sell, or a solicitation to purchase any securities. The author may hold a position in securities discussed. The analysis reflects independent research conducted without external compensation or existing business relationships with the mentioned entities. Please be advised that investing involves significant risk, and past performance is never a guarantee of future market results. Readers should conduct their own due diligence or consult a licensed professional before making any investment decisions based on this data. All information is sourced from public filings and is considered current only as of 8 May 2026.

Join the Value & Momentum Portfolio

Unlock weekly Market Pulse and Macro Notes, high-conviction Momentum Plays, and institutional-level Deep Dives on AI Infra, SaaS & Defense, plus Analyst Upgrades & Rising Price Targets, Insider & Institutional Buys, Sweep Calls above Ask, and 2nd Derivative Analysis by Sector.

Get the edge you need: led by former investment banker Denis D. (Lazard, Rothschild), our research helps you stay ahead of the curve. Subscribe to start your analysis now. Also on X, Seeking Alpha, Spotify and YouTube.